Installation

conda activate ps

pip install visdom

The first command activates the conda environment named ps; the second installs visdom into that environment.
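To confirm that the install landed in the active environment, a quick check like the one below can help. This is only a sketch: the helper name `is_installed` is made up for illustration, and it relies on nothing but the standard library.

```python
import importlib.util


def is_installed(package_name):
    """Return True if package_name can be imported by the current interpreter."""
    return importlib.util.find_spec(package_name) is not None


# Run this inside the activated ps environment.
print("visdom installed:", is_installed("visdom"))
```

If this prints False, the most likely cause is that pip ran in a different environment than the one currently active.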

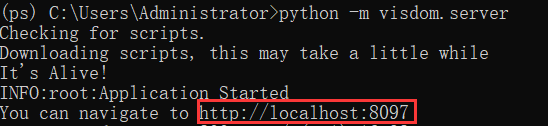

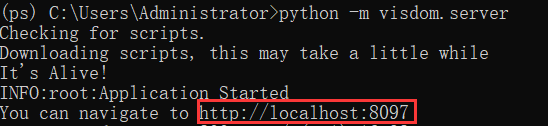

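Starting the server

Start the visdom server (python -m visdom.server), then open the URL shown in the red box in a browser. By default this is http://localhost:8097.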
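If the page does not load, it may be worth checking whether anything is listening on the server port at all. The sketch below assumes the default host and port (localhost, 8097) and uses only the standard library; `server_reachable` is a hypothetical helper, not part of visdom.

```python
import socket


def server_reachable(host="localhost", port=8097, timeout=1.0):
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Connection refused, timed out, or host not resolvable.
        return False


if __name__ == "__main__":
    if server_reachable():
        print("A server is listening on http://localhost:8097")
    else:
        print("Nothing is listening; start the server with: python -m visdom.server")
```

This only tells you that a TCP port is open, not that the process behind it is visdom, so treat it as a first diagnostic step.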

Usage

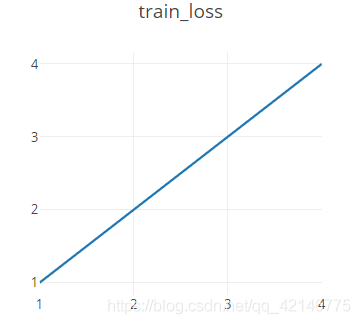

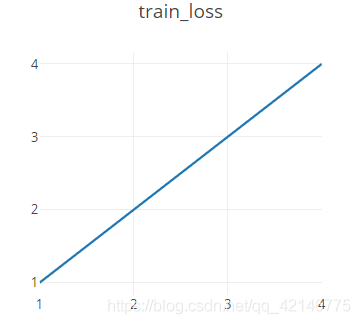

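1. Simple example: a single line

from visdom import Visdom

# Create a client instance; it connects to the running server
viz = Visdom()

# Create a line plot; new points can be appended to it later.
# The arguments are Y first, then X. win is the ID of the pane to draw into
# (visdom creates a new pane if it is omitted); opts carries extras such as the title.
viz.line([1, 2, 3, 4], [1, 2, 3, 4], win="train_loss", opts=dict(title='train_loss'))

# The more typical call during training. It is commented out because
# loss and global_step are not defined in this snippet.
# update='append' adds the point to the existing line; without it the plot is replaced.
# viz.line([loss.item()], [global_step], win="train_loss", update='append')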

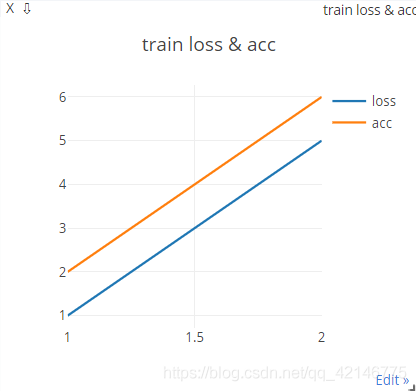

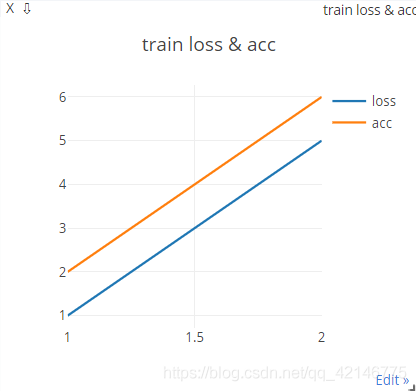
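The create-then-append pattern can be sketched as a small loop. The helper names below (`start_curve`, `append_point`, `log_fake_training`) and the fake loss values are assumptions made up for illustration; the `viz` argument is expected to be a `Visdom()` instance, and only the `viz.line` call shown in the example above is used.

```python
def start_curve(viz, win, title):
    """Create the pane with a single starting point at (0, 0)."""
    viz.line([0.0], [0.0], win=win, opts=dict(title=title))


def append_point(viz, win, step, value):
    """Add one (step, value) point to the line already shown in pane win."""
    viz.line([value], [step], win=win, update='append')


def log_fake_training(viz, num_steps=5):
    """Stand-in for a training loop: logs a made-up, decreasing loss."""
    start_curve(viz, 'train_loss', 'train_loss')
    for step in range(1, num_steps + 1):
        fake_loss = 1.0 / step  # placeholder for loss.item()
        append_point(viz, 'train_loss', step, fake_loss)
```

With the server running, calling `log_fake_training(Visdom())` after `from visdom import Visdom` should draw a short decreasing curve in the train_loss pane.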

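2. Simple example: two lines

Y is passed as a list of rows, [[y1, y2], ...], together with [x, ...]: each row holds the values of both lines at one x position. legend gives the name of each line.

from visdom import Visdom

viz = Visdom()

# Two points (x = 1 and x = 2); 'loss' takes the values 1 and 5, 'acc' takes 2 and 6
viz.line([[1, 2], [5, 6]], [1, 2], win="loss_acc", opts=dict(title='train loss & acc', legend=['loss', 'acc']))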
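visdom reads Y as one row per x position and one column per line, so in the call above the 'loss' line takes the values 1 and 5 and the 'acc' line takes 2 and 6. If your values are stored per line rather than per point, a small transpose gets them into that layout. The helper `to_rows` is a hypothetical name used only for this sketch.

```python
def to_rows(*series):
    """Turn per-line value lists into the row-per-point layout used for Y."""
    lengths = {len(s) for s in series}
    if len(lengths) != 1:
        raise ValueError("all series must have the same number of points")
    return [list(point) for point in zip(*series)]


loss_values = [1, 5]  # values of the 'loss' line at x = 1 and x = 2
acc_values = [2, 6]   # values of the 'acc' line at x = 1 and x = 2

Y = to_rows(loss_values, acc_values)
print(Y)  # [[1, 2], [5, 6]]
```

The result can be passed directly as the first argument of `viz.line`, with `[1, 2]` as the x values.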

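3. Displaying images

from visdom import Visdom

viz = Visdom()

# data is a batch of images and pred holds the predicted labels;
# both come from a training script such as the one in section 4.
# A batch needs viz.images (viz.image draws a single image).
viz.images(data.view(-1, 1, 28, 28), win='x')
viz.text(str(pred.detach().cpu().numpy()), win='pred', opts=dict(title='pred'))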

4. Handwritten digit (MNIST) example

The plots update live in the browser while the script below runs.

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
from torchvision import datasets, transforms

from visdom import Visdom

batch_size = 200
learning_rate = 0.01
epochs = 10

train_loader = torch.utils.data.DataLoader(
    datasets.MNIST('../data', train=True, download=True,
                   transform=transforms.Compose([
                       transforms.ToTensor(),
                       # transforms.Normalize((0.1307,), (0.3081,))
                   ])),
    batch_size=batch_size, shuffle=True)

test_loader = torch.utils.data.DataLoader(
    datasets.MNIST('../data', train=False, transform=transforms.Compose([
        transforms.ToTensor(),
        # transforms.Normalize((0.1307,), (0.3081,))
    ])),
    batch_size=batch_size, shuffle=True)

class MLP(nn.Module):

    def __init__(self):
        super(MLP, self).__init__()

        # Three fully connected layers: 784 -> 200 -> 200 -> 10
        self.model = nn.Sequential(
            nn.Linear(784, 200),
            nn.LeakyReLU(inplace=True),
            nn.Linear(200, 200),
            nn.LeakyReLU(inplace=True),
            nn.Linear(200, 10),
            nn.LeakyReLU(inplace=True),
        )

    def forward(self, x):
        x = self.model(x)
        return x

# Use the first GPU when available, otherwise fall back to the CPU
device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')
net = MLP().to(device)
optimizer = optim.SGD(net.parameters(), lr=learning_rate)
criteon = nn.CrossEntropyLoss().to(device)

viz = Visdom()

# Create both panes up front with a starting point at x = 0
viz.line([0.], [0.], win='train_loss', opts=dict(title='train loss'))
viz.line([[0.0, 0.0]], [0.], win='test', opts=dict(title='test loss&acc.',
                                                   legend=['loss', 'acc.']))
global_step = 0

for epoch in range(epochs):

    for batch_idx, (data, target) in enumerate(train_loader):
        data = data.view(-1, 28*28)
        data, target = data.to(device), target.to(device)

        logits = net(data)
        loss = criteon(logits, target)

        optimizer.zero_grad()
        loss.backward()
        # print(w1.grad.norm(), w2.grad.norm())
        optimizer.step()

        # Append the loss of every training step to the train_loss pane
        global_step += 1
        viz.line([loss.item()], [global_step], win='train_loss', update='append')

        if batch_idx % 100 == 0:
            print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(
                epoch, batch_idx * len(data), len(train_loader.dataset),
                100. * batch_idx / len(train_loader), loss.item()))

    # Evaluate on the test set after every epoch; no gradients are needed here
    test_loss = 0
    correct = 0
    with torch.no_grad():
        for data, target in test_loader:
            data = data.view(-1, 28 * 28)
            data, target = data.to(device), target.to(device)
            logits = net(data)
            test_loss += criteon(logits, target).item()

            pred = logits.argmax(dim=1)
            correct += pred.eq(target).float().sum().item()

    # criteon returns the mean loss of each batch, so average over the number
    # of batches, and do it before plotting so the curve shows the average
    test_loss /= len(test_loader)

    viz.line([[test_loss, correct / len(test_loader.dataset)]],
             [global_step], win='test', update='append')
    # Show the last test batch and the predictions for it
    viz.images(data.view(-1, 1, 28, 28), win='x')
    viz.text(str(pred.detach().cpu().numpy()), win='pred',
             opts=dict(title='pred'))

    print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(
        test_loss, correct, len(test_loader.dataset),
        100. * correct / len(test_loader.dataset)))
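One detail of the evaluation loop is worth spelling out: nn.CrossEntropyLoss returns the mean loss of a batch by default, so the values added to test_loss are per-batch means. When batches can differ in size, weighting each mean by its batch size gives the exact per-sample average. The class below is a minimal sketch of that idea in plain Python; `RunningMean` is a hypothetical helper, not part of PyTorch or visdom.

```python
class RunningMean:
    """Per-sample mean of values that arrive as per-batch means."""

    def __init__(self):
        self.total = 0.0
        self.count = 0

    def update(self, batch_mean, batch_size):
        # Undo the per-batch averaging before accumulating.
        self.total += batch_mean * batch_size
        self.count += batch_size

    @property
    def value(self):
        return self.total / self.count if self.count else 0.0


meter = RunningMean()
meter.update(1.0, 2)  # a batch of 2 samples with mean loss 1.0
meter.update(4.0, 1)  # a batch of 1 sample with mean loss 4.0
print(meter.value)    # 2.0
```

In the test loop this would be used as `meter.update(criteon(logits, target).item(), len(data))`, with `meter.value` passed to `viz.line` once the loop finishes.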
