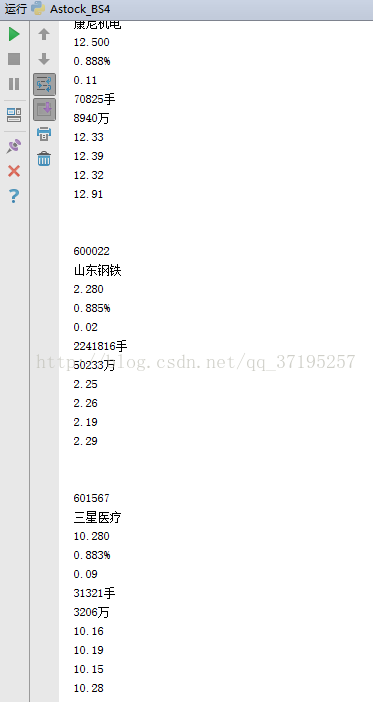

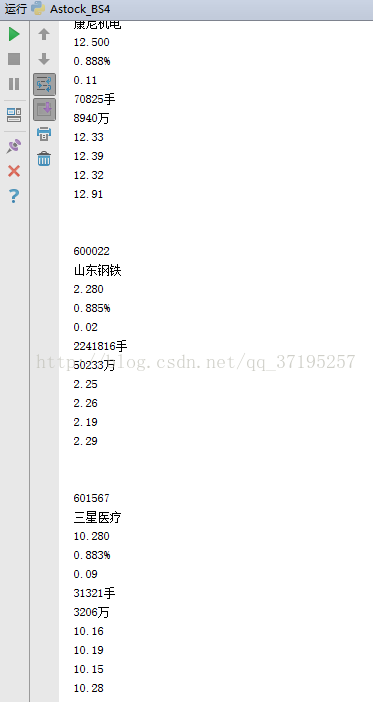

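I wrote a crawler that scrapes, for every A-share stock on the current day, the stock code, name, latest price, percent change, price change, volume, turnover, today's open, yesterday's open, low, and high, so the data can be used for modeling.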

It goes through an IP proxy and waits a random number of seconds between pages.
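The proxy choice and the random page delay can be sketched with the standard library alone. The helper names `pick_proxy` and `random_delay` and the placeholder addresses are assumptions for illustration, not part of the original script.

```python
import random


def pick_proxy(proxies, rng=random):
    """Return a requests-style proxies dict built from one random entry."""
    return {'http': rng.choice(proxies)}


def random_delay(low=0, high=10, rng=random):
    """Return a whole number of seconds in [low, high] to sleep between pages."""
    return rng.randint(low, high)


candidates = ['192.0.2.10:80', '192.0.2.11:8118']  # placeholder addresses
print(pick_proxy(candidates))
print(random_delay(0, 10))
```

Calling `pick_proxy` before every request, rather than once per run, would spread the pages over several proxies; the value from `random_delay` is what would be passed to `time.sleep`.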

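I originally wanted to use XPath, since I had installed XPath Helper yesterday, but it was infuriating: every XPath it generated was wrong. Checking by hand showed that the paths did not match the page source, and searching online suggested this has to do with how the tags are closed, so I went back to BS4 (BeautifulSoup).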

Each step checks itself and, on failure, runs again by calling itself (a nested retry).
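The retry-on-failure idea can also be written as a bounded loop instead of a function that calls itself, which avoids unbounded recursion when a page never loads. This is only a sketch; `retry`, `max_attempts`, and `flaky` are hypothetical names, not part of the script.

```python
def retry(func, max_attempts=3, exceptions=(Exception,)):
    """Call func() until it succeeds or max_attempts calls have failed."""
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return func()
        except exceptions as err:
            last_error = err
            print('attempt %d failed: %s' % (attempt, err))
    # Every attempt failed: surface the last error to the caller.
    raise last_error


calls = {'n': 0}


def flaky():
    """Fail twice, then succeed, to imitate a page that loads on the third try."""
    calls['n'] += 1
    if calls['n'] < 3:
        raise IOError('temporary failure')
    return 'ok'


result = retry(flaky)
print(result)
```

Capping the number of attempts means a dead proxy ends in one clear error rather than a stack of nested calls.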

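I also just learned that a list defined at module level in Python can be modified from inside a function (for example with `append`) without declaring it `global`.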
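A short sketch of what is going on with the list: mutating a module-level list from inside a function works without `global`, whereas assigning to the same name inside a function only creates a local variable. The names `rows`, `add_row`, and `reset_rows_wrong` are illustrative only.

```python
rows = []  # module-level list


def add_row(text):
    # append() mutates the existing list object, so no `global` is needed
    rows.append(text)


def reset_rows_wrong():
    # assignment binds a new local name; the module-level list is untouched
    rows = []
    return rows


add_row('600000')
reset_rows_wrong()
print(rows)
```

The list is not special here: the same holds for any mutable object, such as a dict or a set.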

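    # coding=utf-8
    import random
    import time

    import requests
    from bs4 import BeautifulSoup  # the 'lxml' parser used below needs lxml installed


    def getpage(h1, pro, i, retries=3):
        """Fetch list page i and pass its HTML to parse(); retry on failure."""
        url = 'http://app.finance.ifeng.com/list/stock.php?t=ha&f=chg_pct&o=desc&p=' + str(i)
        try:
            resp = requests.get(url, timeout=16, headers=h1, proxies=pro)
            resp.encoding = "utf-8"
            html = resp.text
            print('page fetched')
        except requests.RequestException:
            # Retry by calling ourselves again, but cap the depth (3 is an
            # arbitrary choice) so a dead proxy cannot recurse forever.
            if retries > 0:
                print('again')
                getpage(h1, pro, i, retries - 1)
            return
        parse(html)


    def parse(html, retries=3):
        """Append the text of every <tr> row to the module-level list L1."""
        try:
            soup = BeautifulSoup(html, 'lxml')
            rows = [item.get_text() for item in soup.find_all('tr')]
        except Exception:
            if retries > 0:
                print('re-parsing')
                parse(html, retries - 1)
            return
        # Store rows only after parsing succeeded, so a retry cannot add duplicates.
        for text in rows:
            print(text)
            L1.append(text)


    print('program started, designed by luke')

    h1 = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/50.0.2661.102 Safari/537.36'}
    o_g = ['101.67.63.200:80', '219.145.43.211:80', '27.184.57.108:8118', '27.184.57.108:8118']
    L1 = []  # module-level list that parse() appends to
    pro = {'http': random.choice(o_g)}  # one randomly chosen proxy for the whole run

    for i in range(1, 15):  # pages 1 to 14
        getpage(h1, pro, i)
        print('next page')
        print('sleeping for a random number of seconds')
        time.sleep(random.randint(0, 10))

    print('totally end')
    print(L1)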
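For modeling, each row's text still has to be split into the fields listed at the top of the post. The sketch below assumes that `get_text()` yields the eleven values separated by whitespace, which has not been checked against the live page; the English field names, `row_to_record`, and the sample row are all made up for illustration.

```python
# Hypothetical keys, in the order the post lists the columns.
FIELDS = ['code', 'name', 'latest_price', 'pct_change', 'price_change',
          'volume', 'turnover', 'open_today', 'open_yesterday', 'low', 'high']


def row_to_record(row_text):
    """Split one row's text on whitespace; return a dict, or None if it does not fit."""
    parts = row_text.split()
    if len(parts) != len(FIELDS):
        return None  # pager rows, empty rows, or an unexpected layout
    return dict(zip(FIELDS, parts))


# Made-up row with placeholder values, not real market data.
sample = '\n000001\nNAME\n1.00\n0.00%\n0.00\n100\n100\n1.00\n1.00\n1.00\n1.00\n'
record = row_to_record(sample)
print(record['code'], record['latest_price'])
```

A header row with eleven cells would also pass the length check, and a stock name containing a space would fail it, so both cases would need separate handling.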
